I Thought “Generating a Report” Was the Hard Part

Until I Saw What Happens After

For a long time in my career, I believed report generation was the end of the story.

A user clicks Download Report.

The backend queries the database.

A file is generated.

The user gets it.

Done.

That mental model worked — until it didn’t.

The moment reports stopped being:

small

synchronous

user-triggered

single-consumer

Everything changed.

This post is about that moment.

And about a flow that finally made sense to me:

.NET Application → Kafka → Ingestion Service → Shared Persistence / Blob Storage

At first, this flow looked unnecessarily complex.

Later, it felt inevitable.

When Reports Were Simple (and Why That Was Deceptive)

Early on, reports lived inside the application.

A controller would:

fetch data

format it

write a file

stream it back

It felt productive.

But over time, requirements grew quietly:

reports became large

generation took minutes

users wanted async processing

multiple teams wanted the same report

reports needed to be stored, audited, reprocessed

Suddenly, “generate and return” wasn’t enough.

That’s when the system started pushing back.

The First Cracks: Reports Are Not Requests

The first realization was subtle but important:

A report is not a request-response problem.

A report is:

heavy

long-running

retryable

replayable

consumed by more than one party

Treating it like an HTTP request forces:

timeouts

retries from impatient users and browsers

duplicated work

tight coupling between UI and backend

Once I accepted that, the architecture had to change.

Step 1: The .NET Application Stops “Doing” the Report

The biggest shift was this:

The .NET application stopped generating reports

and started declaring that a report should exist.

Instead of:

“Generate report now”

The app began to say:

“A report of type X, with parameters Y, is required.”

That declaration became an event, not a function call.

This is where Kafka entered the picture.

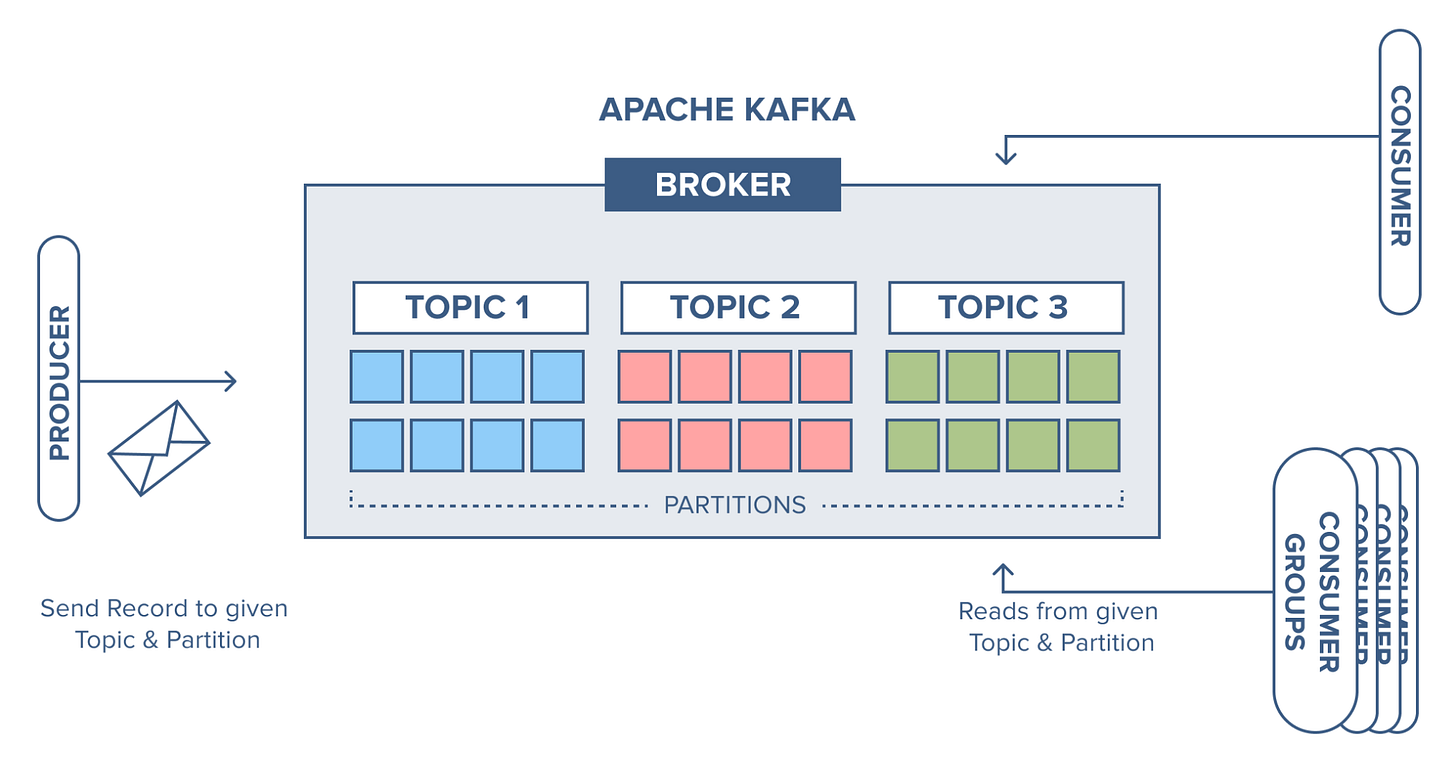
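The post describes the event only in words. As a minimal sketch, here is one possible shape for `ReportRequested`, written in Python for brevity rather than C#. The field names and the JSON encoding are assumptions, not a fixed contract.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ReportRequested:
    """Hypothetical event: records the fact that a report was requested."""
    report_type: str      # "a report of type X"
    parameters: dict      # "with parameters Y"
    requested_by: str     # who or what asked for it
    report_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    requested_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_message(self) -> tuple[bytes, bytes]:
        """Serialize to a (key, value) pair ready to hand to a Kafka producer."""
        key = self.report_id.encode("utf-8")
        value = json.dumps({"event": "ReportRequested", **asdict(self)},
                           sort_keys=True).encode("utf-8")
        return key, value

    @staticmethod
    def from_message(value: bytes) -> "ReportRequested":
        """Rebuild the event on the consuming side."""
        payload = json.loads(value.decode("utf-8"))
        payload.pop("event")
        return ReportRequested(**payload)
```

Keying the message by `report_id` means that, under Kafka's default key-based partitioning, every event about the same report lands on the same partition and keeps its order.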

Why Kafka Was the Right Fit (Not a Queue, Not a Database)

At first glance, Kafka felt like overkill.

But then the nature of reports became clearer.

A report request:

is a fact (“this report was requested”)

may be processed later

may be processed multiple times

may be reprocessed if logic changes

must be auditable

Kafka fits because it keeps events as an ordered, retained log of facts, not as tasks that disappear once someone picks them up.
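The difference between a task queue and a log is easiest to see side by side. The classes below are hypothetical in-memory stand-ins, not a Kafka client: the queue forgets a message once a worker takes it, while the log keeps it so it can be read again. Real Kafka does not keep records forever either; they stay until the topic's retention settings (time or size) remove them.

```python
from collections import deque


class WorkQueue:
    """Task-queue semantics: taking a message removes it."""

    def __init__(self) -> None:
        self._items: deque = deque()

    def put(self, message: dict) -> None:
        self._items.append(message)

    def take(self) -> dict:
        return self._items.popleft()

    def __len__(self) -> int:
        return len(self._items)


class EventLog:
    """Log semantics: records are appended and kept; each reader tracks its own offset."""

    def __init__(self) -> None:
        self._records: list[dict] = []

    def append(self, message: dict) -> int:
        self._records.append(message)
        return len(self._records) - 1  # offset of the new record

    def read_from(self, offset: int) -> list[dict]:
        return self._records[offset:]


request = {"event": "ReportRequested", "report_type": "sales-summary"}
queue, log = WorkQueue(), EventLog()
queue.put(request)
log.append(request)

queue.take()                   # a worker takes the task; the queue forgets it
first_pass = log.read_from(0)  # a worker reads the fact at offset 0
replay = log.read_from(0)      # a later reprocess can read the same fact again
```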

The .NET app publishes something like:

“ReportRequested”

And then… it’s done.

No waiting.

No heavy lifting.

No assumption about who processes it.

That separation alone reduced stress in the codebase.

What the .NET Application Is Responsible For (and What It Is Not)

This part matters.

The .NET application:

validates input

determines report intent

publishes the event to Kafka

returns immediately to the user

It does not:

fetch all report data

format files

write to storage

worry about retrying failed report generation

This was uncomfortable at first.

Letting go of control always is.

But it made the app lighter, faster, and easier to reason about.
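A sketch of that narrow responsibility, assuming a hypothetical `publish` callable injected in place of a real Kafka producer and a made-up `report-requests` topic. The function validates, builds the event, publishes, and returns; it never touches report data.

```python
import json
import uuid
from dataclasses import dataclass
from typing import Callable

ALLOWED_REPORT_TYPES = {"sales-summary", "inventory"}  # hypothetical catalogue


class ValidationError(ValueError):
    pass


@dataclass
class Accepted:
    report_id: str
    status: str = "accepted"  # only the intent exists so far, not the report


def request_report(report_type: str, parameters: dict, user: str,
                   publish: Callable[[str, bytes, bytes], None]) -> Accepted:
    # 1. Validate input.
    if report_type not in ALLOWED_REPORT_TYPES:
        raise ValidationError(f"unknown report type: {report_type}")
    if not isinstance(parameters, dict):
        raise ValidationError("parameters must be an object")
    # 2. Determine intent and describe it as an event.
    report_id = str(uuid.uuid4())
    event = {"event": "ReportRequested", "report_id": report_id,
             "report_type": report_type, "parameters": parameters,
             "requested_by": user}
    # 3. Publish (publish stands in for a real producer; its errors propagate).
    publish("report-requests", report_id.encode("utf-8"),
            json.dumps(event).encode("utf-8"))
    # 4. Return immediately: no data fetching, formatting, or storage here.
    return Accepted(report_id)
```

In an ASP.NET Core controller, the equivalent would typically return HTTP 202 Accepted with the report id, so the client can check status or download the file later.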

Kafka’s Role: The Unbiased Recorder

Kafka does not care about reports.

That’s its strength.

Kafka simply records:

report requested

with parameters

at this time

by this source

It doesn’t:

decide how to generate

care how long it takes

delete the message after it is consumed (records stay until the topic’s retention policy removes them)

That means:

multiple ingestion services (each in its own consumer group) can read the same request

failures don’t lose intent

logic can evolve without asking users to request the report again

Kafka became the memory of intent.
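To make "failures don't lose intent" concrete, here is a toy model of one Kafka partition with committed offsets per consumer group. `Topic`, `consume_one`, and the group names are illustrative, not Kafka's API. Each group reads every record independently, and because the offset is committed only after the handler succeeds, a crash means the record is delivered again. That is at-least-once delivery, so handlers should be safe to run twice.

```python
from collections import defaultdict
from typing import Callable, Optional


class Topic:
    """Toy single-partition topic: an append-only list plus committed offsets per group."""

    def __init__(self) -> None:
        self.records: list[dict] = []
        self.committed: defaultdict = defaultdict(int)  # group -> next offset to read

    def append(self, record: dict) -> None:
        self.records.append(record)

    def poll(self, group: str) -> Optional[tuple[int, dict]]:
        offset = self.committed[group]
        if offset < len(self.records):
            return offset, self.records[offset]
        return None

    def commit(self, group: str, offset: int) -> None:
        self.committed[group] = offset + 1


def consume_one(topic: Topic, group: str, handler: Callable[[dict], None]) -> bool:
    """Handle one record, committing only after the handler succeeds."""
    polled = topic.poll(group)
    if polled is None:
        return False
    offset, record = polled
    handler(record)  # if this raises, the offset is not committed
    topic.commit(group, offset)
    return True


def crashing_handler(record: dict) -> None:
    raise RuntimeError("worker died mid-report")


topic = Topic()
topic.append({"report_id": "r-1"})
pdf_seen: list[dict] = []
audit_seen: list[dict] = []

consume_one(topic, "pdf-ingestion", pdf_seen.append)  # both groups get r-1
consume_one(topic, "audit-ingestion", audit_seen.append)

topic.append({"report_id": "r-2"})
try:
    consume_one(topic, "pdf-ingestion", crashing_handler)
except RuntimeError:
    pass  # r-2 was not committed...
consume_one(topic, "pdf-ingestion", pdf_seen.append)  # ...so it is delivered again
```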

Enter the Ingestion Service: Where Work Actually Happens

This is where the real work lives.

The ingestion service is not a controller.

Not a UI.

Not a database.

It’s a specialized worker whose only responsibility is:

“Turn report intent into report artifacts.”

It:

consumes report events from Kafka

fetches data from multiple sources

applies business rules

formats output (CSV, PDF, Excel, JSON)

handles retries and partial failures

This service is allowed to be:

slow

CPU-heavy

memory-heavy

Because it’s isolated.

Failures here don’t take down the user-facing app.

That isolation was the biggest architectural win.
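A sketch of a single ingestion step, assuming the event arrives as JSON carrying `report_id`, `report_type`, and `parameters`. The `fetchers` mapping, the negative-amount rule, and the storage key layout are hypothetical; a plain dict stands in for blob storage, and only CSV output is shown.

```python
import csv
import io
import json
from typing import Callable, Iterable

# Hypothetical data-access stand-in: given the request parameters, return rows.
Fetcher = Callable[[dict], Iterable[dict]]


def render_csv(rows: list[dict]) -> bytes:
    """Format rows as CSV; assumes every row has the same keys."""
    buffer = io.StringIO()
    if rows:
        writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)
    return buffer.getvalue().encode("utf-8")


def handle_report_requested(message: bytes, fetchers: dict[str, Fetcher],
                            store: dict[str, bytes]) -> str:
    """Turn one ReportRequested event into a stored report artifact."""
    event = json.loads(message)
    fetch = fetchers[event["report_type"]]     # unknown type raises KeyError
    rows = list(fetch(event["parameters"]))    # the slow, heavy part lives here
    rows = [r for r in rows if r.get("amount", 0) >= 0]  # hypothetical business rule
    key = f"reports/{event['report_type']}/{event['report_id']}.csv"
    store[key] = render_csv(rows)              # dict stands in for blob storage
    return key
```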

Why Ingestion Is a Separate Service (and Not Just a Background Job)

At some point I asked:

“Why not just run this as a background job in the same app?”

The answer came from experience:

Because ingestion:

scales differently

fails differently

evolves differently

needs different observability

has different SLAs

Once reports were critical, ingestion became a system, not a helper.

Separating it allowed:

independent scaling

independent deployments

independent retry strategies

And that independence saved us more than once.
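One example of a retry strategy the ingestion service can own on its own terms: capped exponential backoff around a failing step. The function name and defaults are assumptions; `sleep` is injectable so the policy can be tested without actually waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(operation: Callable[[], T], *, attempts: int = 4,
                 base_delay: float = 0.5, max_delay: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry a failing step with capped exponential backoff (0.5s, 1s, 2s, ...)."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    failures = 0
    while True:
        try:
            return operation()
        except Exception:
            failures += 1
            if failures >= attempts:
                raise  # out of attempts: let the caller decide (e.g. park the event)
            sleep(min(max_delay, base_delay * 2 ** (failures - 1)))
```

Because this lives in the ingestion service, changing `attempts` or `base_delay` is an ingestion deployment, not a change to the user-facing app.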

Shared Persistence: The Final Destination

After generation, reports need a home.

Not inside memory.

Not inside the ingestion service.

Not inside Kafka.

They need shared persistence.

This is where blob storage (or shared file systems) comes in.

Blob storage is ideal because:

it’s durable

it’s cheap

it’s scalable

it’s accessible by multiple consumers

it decouples generation from consumption

Once a report is written to blob storage:

users can download it later

other services can process it

analytics jobs can read it

retention policies can apply

The report becomes a first-class artifact, not a temporary output.
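A hypothetical in-memory blob store, to show two properties the text relies on: a deterministic key per report, so reprocessing overwrites the same artifact instead of duplicating it, and a retention rule applied to stored reports. This is not an Azure or S3 SDK; real blob services usually let you configure lifecycle rules on the service side instead of deleting in application code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class StoredBlob:
    data: bytes
    content_type: str
    created_at: datetime


class InMemoryBlobStore:
    """Stand-in for a blob container or bucket."""

    def __init__(self) -> None:
        self._blobs: dict[str, StoredBlob] = {}

    def put(self, key: str, data: bytes, content_type: str, now: datetime) -> None:
        # Same key on reprocessing: one report, one artifact.
        self._blobs[key] = StoredBlob(data, content_type, now)

    def get(self, key: str) -> bytes:
        return self._blobs[key].data

    def keys(self, prefix: str = "") -> list[str]:
        return sorted(k for k in self._blobs if k.startswith(prefix))

    def apply_retention(self, max_age: timedelta, now: datetime) -> list[str]:
        """Delete blobs older than max_age and return their keys."""
        expired = [k for k, b in self._blobs.items() if now - b.created_at > max_age]
        for key in expired:
            del self._blobs[key]
        return expired


def report_key(report_type: str, report_id: str, fmt: str) -> str:
    """Hypothetical key layout: reports/<type>/<id>.<format>."""
    return f"reports/{report_type}/{report_id}.{fmt}"
```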

The Flow, End to End (How It Feels in Practice)

Here’s how the system feels when designed this way:

User requests a report

.NET app validates and publishes intent to Kafka

Kafka records the request

Ingestion service consumes when ready

Report is generated asynchronously

Output is written to shared storage

Status updates can be published back if needed

No blocking.

No guessing.

No hidden coupling.

Just a clean flow of responsibility.
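The flow above, squeezed into one toy program. Lists and a dict stand in for the Kafka topics and blob storage, and every name is hypothetical. The point is the shape: the API call returns before any report exists, and the worker later writes the file and publishes a status record.

```python
import json
import uuid

report_requests: list[bytes] = []    # stands in for the Kafka topic of requests
report_status: list[dict] = []       # stands in for an optional status topic
blob_storage: dict[str, bytes] = {}  # stands in for shared blob storage


def api_request_report(report_type: str, parameters: dict) -> str:
    """The .NET app's part: validate, publish intent, return immediately."""
    if not report_type:
        raise ValueError("report_type is required")
    report_id = str(uuid.uuid4())
    event = {"report_id": report_id, "report_type": report_type,
             "parameters": parameters}
    report_requests.append(json.dumps(event).encode("utf-8"))
    return report_id


def ingestion_poll(offset: int) -> int:
    """The ingestion service's part: consume when ready, generate, store, report status."""
    for raw in report_requests[offset:]:
        event = json.loads(raw)
        content = f"{event['report_type']} report for {event['parameters']}\n"
        key = f"reports/{event['report_id']}.txt"
        blob_storage[key] = content.encode("utf-8")
        report_status.append({"report_id": event["report_id"],
                              "status": "ready", "location": key})
    return len(report_requests)  # next offset to read
```

The returned offset plays the role of a committed consumer offset: calling `ingestion_poll` again with it processes only new requests.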

The Biggest Mental Shift This Flow Taught Me

This architecture taught me something important:

Systems work better when intent, processing, and storage are separate concerns.

Trying to collapse them into one layer feels simpler —

until scale, failures, and change arrive.

Then separation stops feeling like ceremony

and starts feeling like relief.

Why This Design Ages Well

This flow survives change because:

report formats can change without UI changes

ingestion logic can evolve independently

storage policies can change without regeneration

new consumers can read old events that are still within the topic’s retention period

reprocessing is possible without user involvement

That’s not over-engineering.

That’s designing for time.
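A sketch of reprocessing without user involvement: replaying retained `ReportRequested` records through new rendering logic into a separate prefix, so old and new outputs can coexist. With a real Kafka consumer this would usually be a new consumer group starting from the earliest offset (`auto.offset.reset=earliest`), and it only reaches records still within the topic's retention. The records and render functions are hypothetical.

```python
import json

# Retained ReportRequested records (stand-in for a topic still within retention).
retained_events = [
    json.dumps({"report_id": "r-1", "report_type": "sales",
                "parameters": {"month": "2024-01"}}).encode(),
    json.dumps({"report_id": "r-2", "report_type": "sales",
                "parameters": {"month": "2024-02"}}).encode(),
]


def render_v1(event: dict) -> bytes:
    """Original logic: a one-line CSV summary."""
    return f"{event['report_type']},{event['parameters']['month']}".encode()


def render_v2(event: dict) -> bytes:
    """Changed logic: the same request facts, now rendered as JSON."""
    return json.dumps({"type": event["report_type"], **event["parameters"]},
                      sort_keys=True).encode()


def reprocess(records: list[bytes], render, version: str,
              store: dict[str, bytes], start_offset: int = 0) -> int:
    """Replay retained events through a renderer into a versioned prefix.
    No user has to request anything again."""
    for raw in records[start_offset:]:
        event = json.loads(raw)
        store[f"reports/{version}/{event['report_id']}"] = render(event)
    return len(records)
```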

Final Thought

At first, this architecture feels indirect.

Why not just generate the report?

But once you live with it, you realize:

The complexity was always there.

You just moved it to the right places.

When the .NET app stops being a factory

and starts being a coordinator,

everything becomes calmer.

And calm systems are the ones that survive.