I Thought foreach Was Enough Until My Code Started Waiting… and Waiting… and Waiting

Early in my .NET career, I had a simple belief:

If I have a collection, I loop it.

    foreach (var item in items)
    {
        Process(item);
    }

It was clean.

Predictable.

Easy to reason about.

And for a long time… it was enough.

Until one day, it wasn’t.

The First Time “Correct” Code Felt Slow

I remember working on a feature that processed a list of files.

Nothing fancy:

read file

transform data

save output

The code looked fine.

But when the dataset grew, something changed.

It didn’t break.

It just… took forever.

That’s when I first felt the difference between:

code that works

and

code that works under pressure

The Naive Thought: “Let’s Make It Parallel”

The obvious idea came next:

“Why not process multiple items at once?”

That’s when I discovered:

    Parallel.ForEach(items, item =>
    {
        Process(item);
    });

And it felt like magic.

The same logic.

Faster execution.

Problem solved.

At least, that’s what I thought.

When Parallelism Started Breaking Things

Very quickly, subtle issues appeared:

Random exceptions

Data corruption

Race conditions

Inconsistent results

The code didn’t look dangerous.

But it was.

Because I had unknowingly crossed a boundary:

I moved from sequential thinking to concurrent thinking

without changing how I designed my code.

That’s when I realized something uncomfortable:

Parallelism is not just about speed.

It’s about control.

Why foreach Exists (and Why It Still Matters)

Let’s go back for a second.

foreach is not “basic”.

It’s safe by design.

    foreach (var item in items)
    {
        Process(item);
    }

It guarantees:

one item at a time

predictable order

no shared state conflicts (by default)

It can be slow for large workloads, yes, because only one item is processed at a time.

But it’s calm.

And calm code is easy to trust.

What Parallel.ForEach Really Introduced

Parallel.ForEach didn’t just introduce speed.

It introduced:

multiple threads

non-deterministic execution order

shared state risks

scheduling complexity

    Parallel.ForEach(items, item =>
    {
        Process(item);
    });

Now:

items may run simultaneously

order is not guaranteed

multiple threads can touch the same data

This is where design begins to matter more than syntax.
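One small way to take some of that control back is to cap how many items run at once. The sketch below is only an illustration: Process here is a hypothetical stand-in that squares a number, and RunWithCap is a name I made up. The real knob is ParallelOptions.MaxDegreeOfParallelism.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

public static class ParallelControlDemo
{
    // Hypothetical stand-in for real work.
    static int Process(int item) => item * item;

    // Runs Process over the items with at most maxParallel bodies running at once.
    // Returns the highest concurrency that was actually observed.
    public static int RunWithCap(int[] items, int maxParallel)
    {
        var options = new ParallelOptions { MaxDegreeOfParallelism = maxParallel };
        var gate = new object();
        int running = 0, peak = 0;

        Parallel.ForEach(items, options, item =>
        {
            // The counters are shared state, so they are only touched under a lock.
            lock (gate) { running++; peak = Math.Max(peak, running); }
            Process(item);
            lock (gate) { running--; }
        });

        return peak;
    }

    public static void Main()
    {
        int peak = RunWithCap(Enumerable.Range(1, 1000).ToArray(), 2);
        Console.WriteLine($"Peak concurrency observed: {peak} (the cap was 2)");
    }
}
```

The cap does not make the loop body thread-safe. It only limits how many copies of it can be in flight, which helps when the work competes for a limited resource.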

The First Real Problem: Shared State

Here’s a classic mistake I made:

    int total = 0;

    Parallel.ForEach(items, item =>
    {
        total += item.Value;
    });

Looks innocent.

But it’s broken.

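The += is really three steps (read total, add, write it back), and nothing stops two threads from interleaving them. Multiple threads updating total at the same time leads to: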

lost updates

incorrect results

This is where I first encountered the need for thread-safe constructs.
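Here is a minimal sketch of two ways the broken total could be repaired, assuming the values are plain ints (the class and method names are mine, for illustration only). The first makes every update atomic with Interlocked.Add. The second uses the Parallel.ForEach overload with a per-worker subtotal, so the shared variable is touched only once per worker.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public static class SharedTotalDemo
{
    // Option 1: every update to the shared total is a single atomic operation.
    public static int SumWithInterlocked(int[] values)
    {
        int total = 0;
        Parallel.ForEach(values, value =>
        {
            Interlocked.Add(ref total, value);
        });
        return total;
    }

    // Option 2: each worker accumulates a private subtotal and merges it once at the end.
    public static int SumWithLocalTotals(int[] values)
    {
        int total = 0;
        Parallel.ForEach(
            values,
            () => 0,                                        // initial subtotal for each worker
            (value, loopState, subtotal) => subtotal + value, // no shared state touched here
            subtotal => { Interlocked.Add(ref total, subtotal); });
        return total;
    }

    public static void Main()
    {
        int[] values = Enumerable.Range(1, 100).ToArray();
        Console.WriteLine(SumWithInterlocked(values)); // 5050
        Console.WriteLine(SumWithLocalTotals(values)); // 5050
    }
}
```

The second version usually has less contention, because workers spend most of their time on their own subtotal instead of fighting over one variable.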

Enter ConcurrentDictionary — The Controlled Shared State

When multiple threads need to share data, normal collections like Dictionary and List fail, because they are not designed for concurrent writes.

That’s where ConcurrentDictionary comes in.

    var dict = new ConcurrentDictionary<int, string>();

    Parallel.ForEach(items, item =>
    {
        dict.TryAdd(item.Id, item.Name);
    });

Why this works:

fine-grained internal locking for writes, lock-free reads

atomic operations

safe concurrent access

But here’s the deeper realization:

We didn’t just add a new class.

We added discipline to shared data.
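That discipline matters most when a thread has to read a value and then change it. A rough sketch, assuming we are counting words (CountWords is a hypothetical helper, not something from the original code): AddOrUpdate does the "insert or increment" as one logical operation, where a separate ContainsKey check followed by a write would still be a race.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

public static class ConcurrentCountDemo
{
    // Counts how many times each word appears, with many threads updating the same dictionary.
    public static ConcurrentDictionary<string, int> CountWords(string[] words)
    {
        var counts = new ConcurrentDictionary<string, int>();

        Parallel.ForEach(words, word =>
        {
            // Inserts 1 if the key is missing, otherwise replaces the current count with count + 1.
            // The update delegate may be retried under contention, so it must have no side effects.
            counts.AddOrUpdate(word, 1, (key, current) => current + 1);
        });

        return counts;
    }

    public static void Main()
    {
        var counts = CountWords(new[] { "a", "b", "a", "c", "a", "b" });
        Console.WriteLine(counts["a"]); // 3
    }
}
```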

But Even That Wasn’t Enough

At some point, I ran into a different problem.

Not just:

“how do I process items faster?”

But:

“how do I coordinate producers and consumers?”

Because now:

one part of the system generates work

another part processes it

And they don’t move at the same speed.

That’s when BlockingCollection entered my world.

BlockingCollection — When Flow Matters More Than Speed

BlockingCollection is not about parallel loops.

It’s about controlled pipelines.

    var collection = new BlockingCollection<int>();

    // Producer
    Task.Run(() =>
    {
        foreach (var item in items)
        {
            collection.Add(item);
        }

        collection.CompleteAdding();
    });

    // Consumer
    foreach (var item in collection.GetConsumingEnumerable())
    {
        Process(item);
    }

What changed here?

producer and consumer are decoupled

consumer waits when no data is available

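producer slows down if buffer is full (only when the collection is created with a bounded capacity; the unbounded one above never blocks on Add)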

This is not just concurrency.

This is flow control.
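To actually get that backpressure, the collection needs a capacity limit. The following is a small sketch under that assumption; RunPipeline and its parameters are hypothetical names, and the "work" is just summing integers so the result is easy to check.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

public static class BoundedPipelineDemo
{
    // Pushes 1..itemCount through a buffer holding at most `capacity` items and returns their sum.
    public static int RunPipeline(int itemCount, int capacity)
    {
        using var buffer = new BlockingCollection<int>(boundedCapacity: capacity);

        // Producer: Add blocks whenever the buffer already holds `capacity` items.
        Task producer = Task.Run(() =>
        {
            try
            {
                for (int i = 1; i <= itemCount; i++)
                {
                    buffer.Add(i);
                }
            }
            finally
            {
                // Always signal completion, otherwise the consumer would wait forever.
                buffer.CompleteAdding();
            }
        });

        // Consumer: blocks while the buffer is empty, ends once adding is complete and it is drained.
        int sum = 0;
        foreach (int item in buffer.GetConsumingEnumerable())
        {
            sum += item;
        }

        producer.Wait(); // surfaces any exception thrown by the producer
        return sum;
    }

    public static void Main()
    {
        Console.WriteLine(RunPipeline(itemCount: 1000, capacity: 10)); // 500500
    }
}
```

With a capacity of 10, a fast producer can never get more than 10 items ahead of the consumer, which keeps memory flat however large the input is.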

That’s When It Clicked for Me

All these constructs are not random.

They exist because problems evolved.

Let’s map that evolution:

The “Family” (Once It Finally Made Sense)

🟢 foreach

simple iteration

single-threaded

predictable

safe

Used when:

workload is small

order matters

simplicity is priority

🟡 Parallel.ForEach

multi-threaded processing

CPU utilization

faster execution

Used when:

work is independent

no shared state

CPU-bound tasks

🔵 ConcurrentDictionary (and other concurrent collections)

safe shared state

atomic operations

Used when:

threads must share data

consistency matters

Also part of this group:

ConcurrentQueue

ConcurrentBag

ConcurrentStack

🟣 BlockingCollection

producer-consumer coordination

backpressure handling

Used when:

pipeline systems

ingestion/processing flows

uneven workloads

And It Still Didn’t End There…

Once I went deeper, I found more tools in this ecosystem:

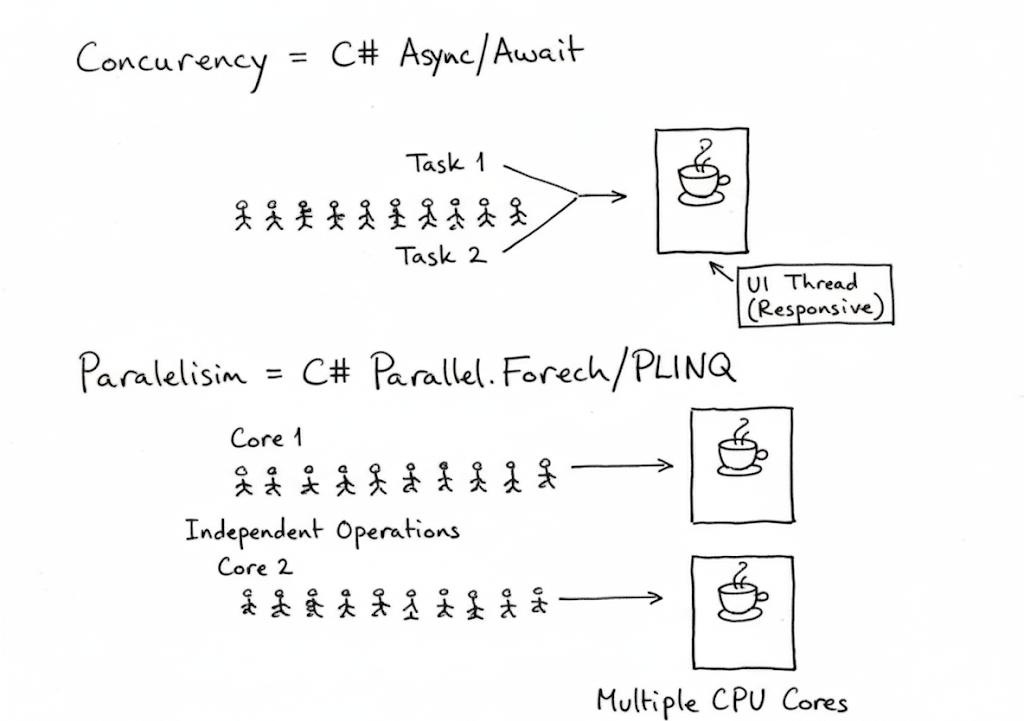

🔶 Task.WhenAll (async parallelism)

    await Task.WhenAll(items.Select(item => ProcessAsync(item)));

This is:

asynchronous parallelism

better for IO-bound tasks

Not the same as Parallel.ForEach.
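The difference is easier to see in a complete sketch. Here ProcessAsync is a hypothetical IO-bound operation, with Task.Delay standing in for a network or disk call. All operations are started first and then awaited together, so no thread sits blocked while they wait.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

public static class WhenAllDemo
{
    // Hypothetical IO-bound work: the delay stands in for a network or disk call.
    static async Task<int> ProcessAsync(int item)
    {
        await Task.Delay(50);
        return item * 2;
    }

    public static async Task<int[]> ProcessAllAsync(IEnumerable<int> items)
    {
        // ToArray forces every operation to start now; WhenAll then awaits them as a group.
        Task<int>[] tasks = items.Select(item => ProcessAsync(item)).ToArray();

        // Results come back in the same order as the tasks, whatever order they finished in.
        return await Task.WhenAll(tasks);
    }

    public static async Task Main()
    {
        int[] results = await ProcessAllAsync(new[] { 1, 2, 3 });
        Console.WriteLine(string.Join(", ", results)); // 2, 4, 6
    }
}
```

This starts everything at once, so for very large inputs some form of throttling is worth considering.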

🔷 Parallel LINQ (PLINQ)

    var results = items
        .AsParallel()
        .Select(x => Process(x))
        .ToList();

A more declarative way of parallelism.
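One thing to keep in mind: by default PLINQ does not promise that results come back in source order. If order matters, AsOrdered asks it to preserve that order, at some cost. A minimal sketch, with Process again as a hypothetical stand-in that squares its input:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class PlinqDemo
{
    // Hypothetical stand-in for real CPU-bound work.
    static int Process(int x) => x * x;

    public static List<int> SquareInOrder(IEnumerable<int> items)
    {
        return items
            .AsParallel()
            .AsOrdered()              // keep results in source order
            .Select(x => Process(x))
            .ToList();
    }

    public static void Main()
    {
        List<int> results = SquareInOrder(Enumerable.Range(1, 5));
        Console.WriteLine(string.Join(", ", results)); // 1, 4, 9, 16, 25
    }
}
```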

🔶 Channels (System.Threading.Channels)

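A modern, async-friendly alternative to BlockingCollection.

More control: bounded or unbounded, with a choice of what happens when the buffer is full.

Often better performance too, because readers and writers await instead of blocking a thread.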
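As a rough sketch of what that looks like, here is the same producer and consumer idea written against a bounded channel. RunAsync and its parameters are names I picked for illustration; the channel calls themselves (Channel.CreateBounded, WriteAsync, Complete, ReadAllAsync) are the real API.

```csharp
using System;
using System.Threading.Channels;
using System.Threading.Tasks;

public static class ChannelDemo
{
    // Sends 1..itemCount through a bounded channel and returns their sum.
    public static async Task<int> RunAsync(int itemCount, int capacity)
    {
        Channel<int> channel = Channel.CreateBounded<int>(capacity);

        // Producer: WriteAsync waits asynchronously (no blocked thread) while the channel is full.
        Task producer = Task.Run(async () =>
        {
            try
            {
                for (int i = 1; i <= itemCount; i++)
                {
                    await channel.Writer.WriteAsync(i);
                }
            }
            finally
            {
                // Tells the reader that no more items are coming.
                channel.Writer.Complete();
            }
        });

        // Consumer: the loop ends once the writer is complete and the channel is drained.
        int sum = 0;
        await foreach (int item in channel.Reader.ReadAllAsync())
        {
            sum += item;
        }

        await producer; // surfaces any exception thrown by the producer
        return sum;
    }

    public static async Task Main()
    {
        Console.WriteLine(await RunAsync(itemCount: 1000, capacity: 10)); // 500500
    }
}
```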

🔷 TPL Dataflow (advanced pipelines)

For complex systems:

multi-stage processing

batching

throttling
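A two-stage pipeline gives a feel for it. This is only a sketch under my own assumptions: the stages (square, then sum) and the option values are arbitrary, and depending on your target framework you may need to add the System.Threading.Tasks.Dataflow NuGet package.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

public static class DataflowDemo
{
    // Stage 1 squares each number in parallel; stage 2 adds the squares up.
    public static async Task<int> RunAsync(IEnumerable<int> items)
    {
        var square = new TransformBlock<int, int>(
            x => x * x,
            new ExecutionDataflowBlockOptions
            {
                MaxDegreeOfParallelism = 4, // up to 4 items transformed at once
                BoundedCapacity = 16        // throttling: SendAsync waits when the block is full
            });

        int sum = 0;
        // An ActionBlock handles one item at a time by default, so sum needs no lock here.
        var collect = new ActionBlock<int>(x => sum += x);

        // Completion of the first stage flows on to the second.
        square.LinkTo(collect, new DataflowLinkOptions { PropagateCompletion = true });

        foreach (int item in items)
        {
            await square.SendAsync(item);
        }

        square.Complete();
        await collect.Completion;
        return sum;
    }

    public static async Task Main()
    {
        Console.WriteLine(await RunAsync(Enumerable.Range(1, 10))); // 385
    }
}
```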

So… Why So Many?

This question bothered me for a long time:

“Why doesn’t .NET just give one way to do this?”

Because the problem is not one-dimensional.

Each tool solves a different kind of pain.

The Real Answer (The One That Stayed With Me)

This is what finally made everything feel calm:

Concurrency is not a feature.

It’s a set of trade-offs.

Every new construct exists because:

the previous one was not enough

or was too dangerous in certain situations

What Changed in My Thinking

I stopped asking:

“Which one is better?”

And started asking:

“What problem am I actually solving?”

Because:

using Parallel.ForEach for IO work is wrong

using Task.WhenAll for CPU-heavy loops is inefficient

using shared state without concurrent collections is dangerous

using concurrency when not needed is unnecessary complexity

Final Thought

At first, this ecosystem feels overwhelming.

Too many options.

Too many APIs.

Too many patterns.

But over time, something shifts.

You stop seeing:

different classes

And start seeing:

different responsibilities

And that’s when everything becomes simpler.

If You Remember One Thing

Start simple.

Add concurrency only when pain demands it.

And when you add it, choose the tool that matches the problem — not the trend.

That’s how this evolution makes sense.